The Basic Anatomy Of A Horizon Blast Session

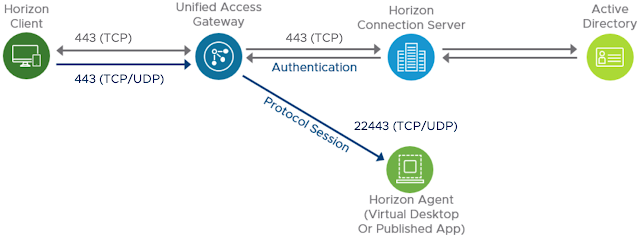

Typically, the primary protocol runs entirely over port 443, both between the Horizon client and the UAG appliance and between the UAG appliance and the Horizon Connection Server. For the secondary protocol, Blast Extreme in this example, traffic flows over port 8443 between the client and the UAG appliance. Then, from the UAG appliance to the virtual desktop or RDS host, traffic flows over port 22443.
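To keep those hops straight, here's a minimal sketch that writes the port layout down as data. The port numbers come straight from the description above; the hop labels, the `Hop` type and the `ports_between` helper are names I made up for illustration, not anything that ships with Horizon.

```python
from typing import NamedTuple


class Hop(NamedTuple):
    source: str
    destination: str
    protocol: str
    port: int


# Ports as described above. "Horizon agent" is a hypothetical label standing in
# for the virtual desktop or RDS host.
SESSION_HOPS = [
    Hop("Horizon client", "UAG", "primary", 443),
    Hop("UAG", "Connection Server", "primary", 443),
    Hop("Horizon client", "UAG", "Blast Extreme", 8443),
    Hop("UAG", "Horizon agent", "Blast Extreme", 22443),
]


def ports_between(source: str, destination: str) -> list[int]:
    """Return the sorted, de-duplicated ports used between two components."""
    return sorted(
        {
            hop.port
            for hop in SESSION_HOPS
            if hop.source == source and hop.destination == destination
        }
    )


if __name__ == "__main__":
    for hop in SESSION_HOPS:
        print(f"{hop.source} -> {hop.destination}: {hop.protocol} on {hop.port}")
```

A list like this is handy as a checklist when you're working through firewall rules with your network team, one hop at a time.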

In the context of optimizing Blast for your environment, one of the first questions to ask about your Blast traffic is whether UDP or TCP is used for the transport protocol. For most use cases UDP is the better choice, and it's what the Blast protocol attempts to leverage first by default. Accordingly, confirming that UDP is actually in use in your environment is a first step towards achieving an optimal Blast experience.

Observing The Transport Protocol In Use

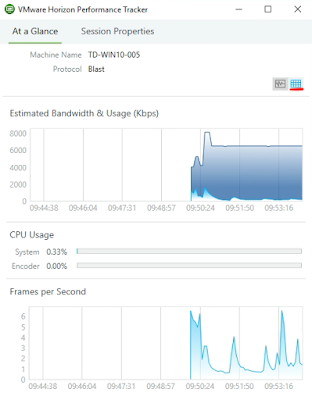

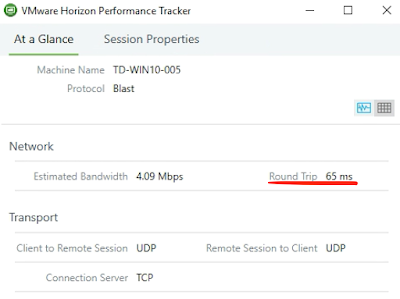

While you can look at Blast logs to determine what transport type is in use, the Horizon Performance Tracker offers a far easier and more convenient way to get this info. While not installed by default, Horizon Performance Tracker is built right into the Horizon agent and is offered as an optional component during the agent install. (Here's more official guidance on installing Horizon Performance Tracker.) Once it's installed, launch Horizon Performance Tracker from your Start menu within an active session. When it's launched you're presented with the, "At a Glance," tab. While this initial screen is certainly interesting in its own right, things get particularly useful when you click on the icon with the grids in the right corner. (Underlined with red in the image below.)

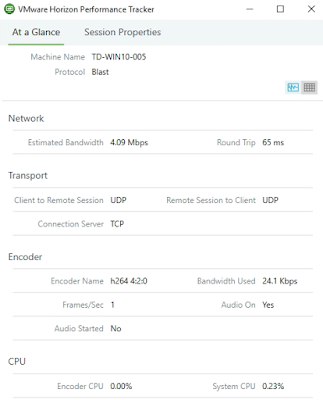

In the screenshot below, under the transport section, there's confirmation that UDP is leveraged for the transport protocol in both directions, the default behavior that Blast strives for.

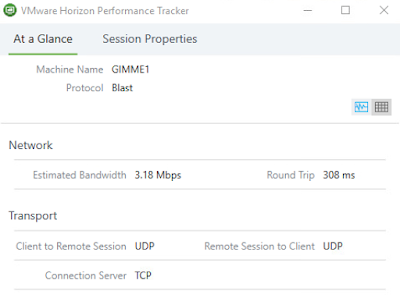

If UDP were being blocked for some reason, you'd see something like this:

Again, for most use cases, UDP is the optimal transport, with the optimization guide stating that with but two exceptions, "VMware recommends that you use UDP for the best user experience. And if Blast Extreme encounters problems making its initial connection over UDP, it will automatically switch and use TCP for the session instead." Accordingly, in most scenarios, if you see TCP in use as a transport protocol, something has gone wrong and tuning Blast involves making adjustments to ensure UDP is leveraged instead. Your first step is to determine if there are issues with UDP port connectivity for 8443 or 22443 along your Horizon session's network path. (I've provided guidance on this process in a previous post, Troubleshooting Port Connectivity For Horizon's Unified Access Gateway 3.2 Using Curl And Tcpdump.) If you find that UDP traffic is getting blocked while traversing a foreign network outside of your control, you can try to stack the deck in your favor by leveraging port 443 for external Blast traffic on your UAG appliance.

Shifting External Blast Traffic To Port 443 On UAG

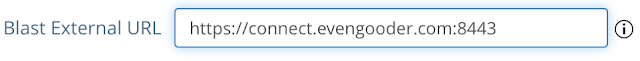

Shifting Blast traffic to 443 on your UAG appliance is a relatively simple process. First, navigate to Horizon Edge services on the UAG appliance. Here's what it looks like when the Blast External URL is configured for port 8443:

To change it to 443, simply append 443 instead of 8443 to the configured URL:

When configuring the Blast External URL, I like to imagine I'm sitting inside a Horizon endpoint client itself, looking for a path to forward Blast traffic to. Think in terms of what's externally resolvable and accessible from the perspective of the endpoint. Typically, it ends up being the VIP and associated DNS name on a load balancer.
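If you manage a handful of UAG appliances and want to sanity check the URL values before pasting them into the admin UI, the port swap can be sketched in a few lines of Python. This is only string handling with the standard library. It doesn't talk to a UAG, and the `with_port` helper and the `uag.example.com` hostname are hypothetical.

```python
from urllib.parse import urlsplit, urlunsplit


def with_port(url: str, port: int) -> str:
    """Return the URL with its port replaced (or added).

    Note: urlsplit lowercases the hostname, and any user:password@ prefix
    is dropped, which is fine for a Blast External URL.
    """
    parts = urlsplit(url)
    if parts.hostname is None:
        raise ValueError(f"no hostname found in {url!r}")
    host = parts.hostname
    if ":" in host:
        # IPv6 literals need their brackets restored.
        host = f"[{host}]"
    netloc = f"{host}:{port}"
    return urlunsplit(
        (parts.scheme, netloc, parts.path, parts.query, parts.fragment)
    )


if __name__ == "__main__":
    # Hypothetical external hostname, shown only for illustration.
    print(with_port("https://uag.example.com:8443", 443))
```

The function also adds a port when none is present, which makes it easy to be explicit about 443 rather than relying on the scheme default.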

When To Use TCP For Your Transport Protocol

The optimization guide indicates that UDP is usually the optimal transport to leverage, with two exceptions. First, you'd want to go with TCP if, "Traffic must pass through a UDP-hostile network service or device such as a TCP-based SSL VPN, which re-packages UDP in TCP packets." Ever since the days of PCoIP dominance, TCP-based SSL VPNs have been a challenge for Horizon. The encapsulation of UDP traffic into TCP packets by such VPNs is a real downer, nullifying the performance benefits of UDP. For Blast traffic it's best to stick to TCP when using these types of devices or when some other network challenge prevents UDP use.

The second reason to go with TCP instead of UDP is when, "WAN circuits are experiencing very high latency (250 milliseconds and greater)." In regard to this second consideration, Horizon Performance Tracker can again be of assistance. Round trip latency is prominently displayed under the network section in real-time.

In the above screen shot, with latency at 65ms it would seem that all is right with the world in terms of the transport selection of UDP. However, if we were witnessing some latency above 250ms, something like below, we'd want to consider forcing TCP usage.

With latency above 250 ms and low packet loss, the optimization guide is pretty clear in its guidance to leverage TCP for the transport protocol. However, if packet loss were also high, the decision wouldn't be as straightforward. Since Blast's UDP stack handles packet loss better than its TCP stack, you might still want to stick with UDP as a transport protocol in a high latency situation. Fortunately, the Horizon Help Desk Tool can provide insight into whether or not there's packet loss so we can make an informed decision.

Horizon Help Desk Tool

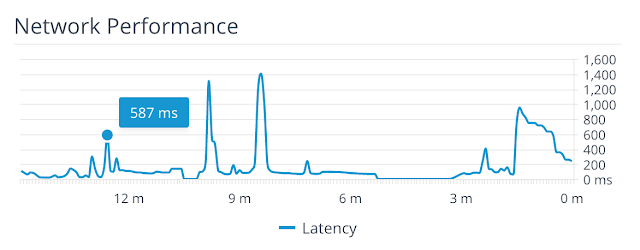

The Horizon Help Desk Tool offers an even more useful view of network latency for a particular Horizon session. It provides a breakdown of network latency for a specific session over the span of 15 minutes, giving you a better overall sense of what the latency is. Below is a graph cranked out by the tool for a particularly challenged Horizon session that spikes to latencies above 1200 ms, certainly not the most ideal of scenarios.

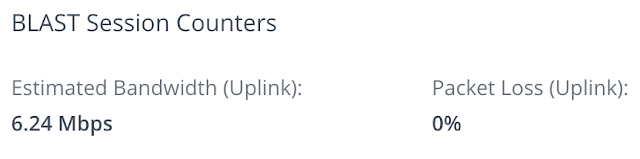

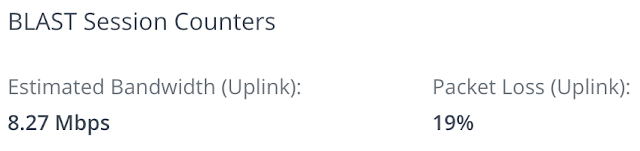

A further benefit of the tool is its ability to report on packet loss within a session which, as previously mentioned, is relevant in determining the optimal transport protocol. After looking up a user's session, from the details screen expand the user metrics section and under Blast counters you'll see the packet loss. For the session above, though there's high latency, there's no indication of packet loss.

With high latency and zero percent packet loss, we have network conditions better accommodated by the TCP transport. However, had there been high packet loss, we'd have to make a choice between TCP's performance benefits in high latency environments and the UDP stack's ability to better handle packet loss. To simulate such a situation in my lab I used a utility called clumsy on my remote endpoint. After I configured the utility to create significant packet loss, the hit on network performance was clearly reflected in the Horizon Help Desk Tool.

In this situation, where packet loss is high, UDP might be the preferred transport to stick with, despite the high latency. Both the VMware Blast Extreme Optimization Guide and the Blast Extreme Display Protocol In VMware Horizon 7 white paper indicate that UDP is the optimal transport to stick with under high packet loss conditions. The white paper specifically states that, "UDP is better at handling packet loss than TCP. UDP can deliver a good user experience in conditions of up to 20 percent packet loss."
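The transport decision discussed above boils down to a small decision tree, sketched here in Python. The 250 ms latency threshold comes from the optimization guide. The cutoff for what counts as "high" packet loss is purely my own assumption for illustration, since neither document gives a hard number for when loss should outweigh latency, and `suggest_transport` is a hypothetical helper, not part of any Horizon tooling.

```python
HIGH_LATENCY_MS = 250    # threshold quoted from the optimization guide
HIGH_LOSS_PERCENT = 5.0  # ASSUMPTION: illustrative cutoff, not from VMware


def suggest_transport(
    latency_ms: float,
    packet_loss_percent: float,
    udp_hostile_path: bool = False,
) -> tuple[str, str]:
    """Return a (transport, reason) pair for the observed conditions."""
    if udp_hostile_path:
        return "TCP", "path re-packages or blocks UDP (e.g. TCP-based SSL VPN)"
    if latency_ms >= HIGH_LATENCY_MS:
        if packet_loss_percent >= HIGH_LOSS_PERCENT:
            # Judgment call: UDP handles loss better, despite the latency.
            return "UDP", "high latency, but packet loss is also high"
        return "TCP", "very high latency with low packet loss"
    return "UDP", "default recommendation for most use cases"


if __name__ == "__main__":
    for latency, loss in [(65, 0.0), (300, 0.0), (300, 15.0)]:
        transport, reason = suggest_transport(latency, loss)
        print(f"{latency} ms, {loss}% loss -> {transport}: {reason}")
```

Treat the high latency plus high loss branch as a starting point for testing rather than a verdict. It's exactly the case where you'd want to try both transports and compare.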

Fun Facts About Codecs

The optimization guide states that, "A codec is a computer program that can encode or decode a digital data stream for transmission. The word codec is a blend of the words coder-decoder." As of today, Blast offers a choice between three codecs: H.264, JPG/PNG and H.265, with H.264 being the default.

One of the H.264 codec's claims to fame is its ability to handle rapidly changing content. Another major claim to fame is the ability to leverage the built-in H.264 chip of endpoint devices for hardware-based decoding, sparing the endpoint's CPUs the trouble. This both improves performance and extends the battery life of these endpoint devices. When NVIDIA GRID cards are in the mix, things get even more exciting. The encoding can be offloaded to the NVIDIA GPU, improving performance and taking the encoding work off the server's CPUs. This offloading in turn improves user density and efficiency on the ESXi hosts.

JPG/PNG, sometimes referred to as the adaptive encoder, is the original codec used by Blast and does software-based encoding and decoding. While H.264 is the default, Blast will fall back to JPG/PNG when H.264 isn't an option, such as when the HTML client is used from a non-Chrome browser. It's also desirable when you have, "Images that require lossless compression," such as high-quality still images, complex fonts or medical imaging. However, the optimization guide is pretty clear that it's not so great for rapidly moving content, something the H.264 codec excels at.

H.265, also known as High Efficiency Video Coding (HEVC), is the bigger, badder successor to H.264. While it introduces bandwidth improvements, it absolutely requires the use of NVIDIA GRID GPUs on your ESXi hosts. It also requires clients with H.265 decode support, which is common nowadays but not guaranteed.

Finally, a new feature called Encoder Switcher allows Blast, "to dynamically switch between the JPG/PNG and H.264 codecs, depending on screen content type."

Using Horizon Performance Tracker To Observe Codec Usage

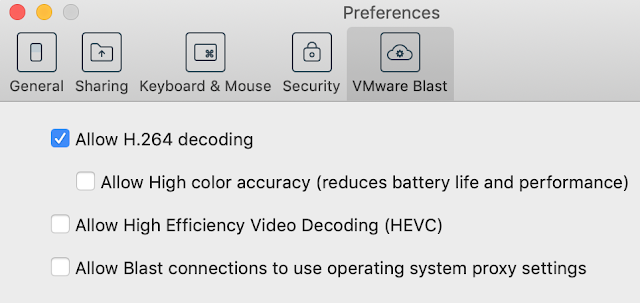

Regardless of which codec is best suited for your use case, Horizon Performance Tracker can provide visibility into which one your session is actually using. To observe this in action we can control the codec selection using the VMware Blast settings on the Horizon client. Here's a screenshot of the codec settings from the Horizon client:

If you uncheck the option, "Allow H.264 decoding," you'll fall back to JPG/PNG and Performance Tracker will report, "adaptive", as the encoder. (Note: The Blast Extreme Display Protocol in VMware Horizon 7 clarifies that, "JPG/PNG is referred to as the adaptive encoder.")

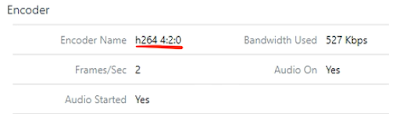

Accepting the default of, "Allow H.264 decoding," on the other hand, will under typical conditions cause Horizon Performance Tracker to report, "h264 4:2:0," as the encoder.

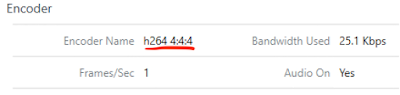

Should you select the option, "Allow high color accuracy," and H.264 is successfully implemented, the tool will report back, "h264 4:4:4," as the encoder name.

Further, if H.264 is enabled and there's an NVIDIA GRID card enabled for your VM, the tool reports back an encoder name of, "NVIDIA NvEnc H264." Here's an example from a GPU-enabled VM in VMware's TestDrive environment:

Finally, to allow for use of H.265 when connecting to the same virtual desktop as detailed above, on the Horizon client I checked the box for, "Allow High Efficiency Video Decoding (HEVC)." With my client supporting H.265, Horizon Performance Tracker reports back, "NVIDIA NvEnc HEV," as the encoder in use.
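To summarize the encoder names seen so far, here's a small lookup sketch in Python. The encoder strings are the ones Performance Tracker displayed in my sessions, as quoted above. The descriptions, the case-insensitive matching and the `describe_encoder` helper are my own additions for illustration, and other Horizon versions may well report different strings.

```python
# Encoder names as observed in Horizon Performance Tracker above.
# Descriptions are my own shorthand, not official VMware wording.
ENCODER_DESCRIPTIONS = {
    "adaptive": "JPG/PNG, software encoded",
    "h264 4:2:0": "H.264, default color sampling",
    "h264 4:4:4": "H.264 with high color accuracy",
    "nvidia nvenc h264": "H.264, encoded on an NVIDIA GPU",
    "nvidia nvenc hev": "H.265 (HEVC), encoded on an NVIDIA GPU",
}


def describe_encoder(encoder_name: str) -> str:
    """Map a reported encoder name to a short description."""
    key = encoder_name.strip().casefold()
    return ENCODER_DESCRIPTIONS.get(key, "unrecognized encoder name")


if __name__ == "__main__":
    for name in ["adaptive", "h264 4:2:0", "NVIDIA NvEnc H264"]:
        print(f"{name!r}: {describe_encoder(name)}")
```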

Observing Blast's Bandwidth Consumption In Real-Time

Roughly 5 and a half years ago I had the honor of meeting the great Cale Fogel, Breaker Of Chains, Knower Of Things And Talker Of Straight. During some chit chat in the hallways of VMworld 2014 he summarized the situation with display protocols quite succinctly. "It's all about how much screen real estate you're dealing with, resolution, number of screens, versus the amount of changes on the screen. The more changes that occur and the higher the resolution, the more pixels that have to cross the wire and get reordered on the endpoint." So, if you have a single monitor with low resolution and a completely static screen, you'll have very few pixels to change and the protocol will gobble up very little in terms of compute resources and network bandwidth. On the other hand, if you have multiple monitors at high resolution, displaying a lot of actively changing content, both compute consumption and bandwidth usage will be high.

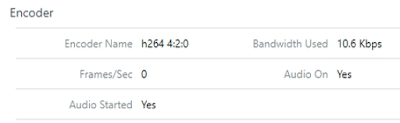

An easy way to see this first hand in real-time is through the Horizon Performance Tracker. Along with the nifty info we've discussed so far, it details how much bandwidth the display protocol is currently gobbling up. Under the encoder section, there's a field, "Bandwidth used." Reduce the screen resolution and do nothing within the VM, and you'll see the bandwidth usage plummet.

Only 10k of traffic generated by the Blast protocol, woo-hoo! However, don't get too excited haole. Within the same session, move the Horizon Performance Tracker utility itself around on the desktop, shaking it hard and violently like a chimpanzee on meth. Bandwidth will temporarily spike.

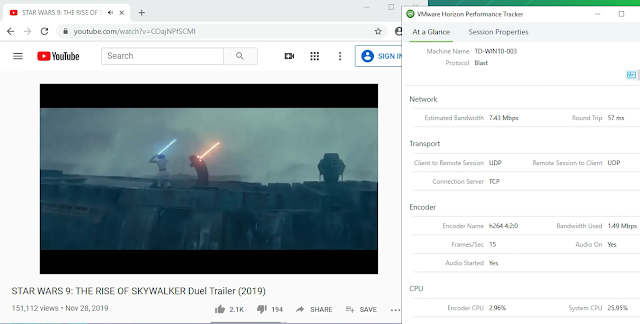

Now, for some real fun, fire up YouTube, put on a trailer for Star Wars, increase the YouTube resolution to high definition and then take a look at Performance Tracker.

When it comes to Horizon's display protocols, I like to say, the only way through is through. Lots of changes on the desktop translate to lots of compute and bandwidth usage. Fundamentally, it's more of a math problem than anything else. In the optimization guide, this dynamic is well articulated with the statement, "It is extremely important to recognize that optimizing for higher quality nearly always results in more system resources being used, not less. Except under very unique conditions, it is not possible to increase quality while limiting system resources." It goes on to elaborate on the inverse relationship between quality experience and optimized resource usage, stating, "Except in unique situations, optimizing quality increases bandwidth utilization, whereas optimizations for WANs require limiting quality to function over poor network conditions." So, you're going to have to be honest with yourself and pick your poison.
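To put some rough numbers on that math problem, here's a back-of-the-envelope sketch in Python. It only counts raw changed pixels per second, before any codec compression, so it is not a bandwidth estimate. The changed fraction and the 30 frames per second figure are assumptions I picked for illustration, and `changed_pixels_per_second` is a hypothetical helper.

```python
def changed_pixels_per_second(
    width: int,
    height: int,
    monitors: int,
    changed_fraction: float,
    frames_per_second: int = 30,  # ASSUMPTION: illustrative frame rate
) -> int:
    """Raw pixels that change each second, before any compression."""
    if not 0.0 <= changed_fraction <= 1.0:
        raise ValueError("changed_fraction must be between 0 and 1")
    pixels_per_frame = width * height * monitors
    return round(pixels_per_frame * changed_fraction * frames_per_second)


if __name__ == "__main__":
    # One static 1080p monitor: nothing changes, nothing to send.
    print(changed_pixels_per_second(1920, 1080, 1, 0.0))
    # Two 4K monitors with half of each screen changing every frame.
    print(changed_pixels_per_second(3840, 2160, 2, 0.5))
```

The actual bytes on the wire depend heavily on the codec and the content, but the scaling is the point. Double the monitors or the amount of on-screen change and the work roughly doubles with it.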

More Advanced Tuning Covered By The Optimization Guide

The optimization guide goes on to cover additional Blast tuning settings such as Max Session Bandwidth, Minimum Session Bandwidth and Frames Per Second. While Horizon Performance Tracker can assist with the configuration of these more advanced settings, before mucking around with them I'd circle your attention back to the VM, OS and underlying infrastructure. This isn't to say that advanced Blast tuning methods are a waste of time. It's just that in the absence of other information about your use case, holistically speaking, I'd say you're more likely to have challenges with the user experience due to the VM and underlying infrastructure than due to a lack of advanced Blast tuning. The optimization guide echoes this sentiment, recommending that, "Before tuning Blast Extreme, it is critical to properly size and optimize the virtual desktops, Microsoft RDSH servers, and supporting infrastructure." Remember, key processes behind Blast, VMBlastS.exe, VMBlastW.exe and VMBlastP.exe, are running WITHIN the OS of your virtual desktops. So if those VMs are under-specced or starved for resources, your Blast processes will be starved and Blast performance is going to suck. Further, if critical apps within your VM are starved for resources, no amount of tuning is going to make up for an app experience that's ruined before anything's even been remotely displayed. Along those lines, after confirming your VMs are properly specced, optimized and supported by your infrastructure, I'd recommend taking a hard second look at profile configuration, critical apps and the network paths those apps rely on. Often a poor user experience is the result of a deficiency outside the Horizon stack, with Horizon just being the messenger. And we all know what folks love to do to messengers.

So, in summary, when it comes to Blast tuning, I'd begin by confirming you're getting the proper transport and codec selection. I'd also recommend being honest with yourself about the bandwidth requirements, use case requirements and network limitations. However, before doing a deep dive into the advanced tuning of Blast, I'd take a very long, hard second look at the rest of your environment.